FTM workshop

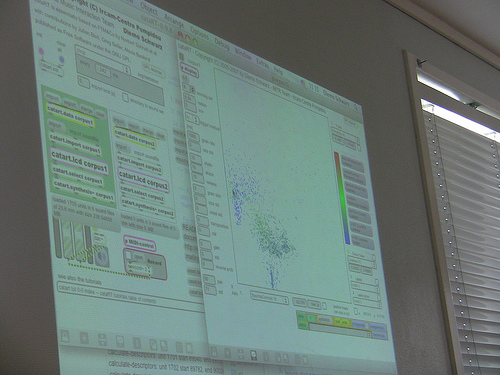

The basic idea of FTM is to extend the data types exchanged between the objects in a Max/MSP patch with complex data structures such as matrices, sequences, dictionaries, breakpoint functions, tuples, and whatever else might be helpful for processing music, sound, and motion-capture data. It also comprises visualization and editor components and operators (expressions and externals) on these data structures, together with file import/export operators (SDIF, MIDI, …).

As examples of applications in the areas of sound analysis, transformation and synthesis, gesture following, and manipulation of musical scores, we will look at the parts and packages of FTM that provide arbitrary-rate signal processing (Gabor), matrix operations, statistics, machine learning (MnM), corpus-based synthesis (CataRT), sound description data exchange (SDIF), and Jitter support. The concepts presented will be tested and consolidated through programming exercises on real-time musical applications and through free experimentation.

The workshop ends with a live event/concert at Landmark on the evening of Friday, March 13, featuring Diemo Schwarz, Victoria Johnson (electric violin), and the South African sound artist James Webb.

The workshop is led by Diemo Schwarz.

Biography:

Diemo Schwarz is a researcher-developer in real-time applications of computers to music, with the aim of improving musical interaction, notably through sound analysis-synthesis and interactive corpus-based concatenative synthesis.

At Ircam (Institut de Recherche et Coordination Acoustique/Musique) in Paris, France, since 1997, he has combined his studies of computer science and computational linguistics at the University of Stuttgart, Germany, with his interest in music as an active performer and musician. He holds a PhD in computer science applied to music from the University of Paris, awarded in 2004 for the development of a new method of concatenative musical sound synthesis by unit selection from a large database. This work continues in the CataRT application for real-time interactive corpus-based concatenative synthesis within Ircam’s Real-Time Music Interaction (IMTR) team.

Links:

http://recherche.ircam.fr/equipes

http://recherche.ircam.fr/equipes/analyse-synthese/schwarz/mtbf/

The workshop is supported by the Norwegian Art Council and the Municipality of Bergen.